
Using Machine Learning to Benchmark Hospitals Reveals Dissimilarities Among the Most Renowned U.S. Hospitals

September 11, 2022

Key Takeaways

- The top five renowned U.S. hospitals are not necessarily peers, as evidenced by the variation in the results of the Aggregate SimilarityIndex™.

- Existing hospital benchmarking methodologies based on objective data are limited to quality measures, which restricts their ability to make complete comparisons.

- The subjective nature (e.g., reputation surveys) and data limitations of existing hospital benchmarking sources result in a false sense of prestige among the nation’s “top” hospitals.

Historically, health economy stakeholders could not accurately identify relevant hospital peers with traditional hospital benchmarking resources, which rely primarily on quality measures coupled with subjective criteria. The existing benchmarking resources have received criticism from both clinicians and academics in past years, with one group of researchers citing prevalent issues across lists, including limited data, a lack of data audits, and varying methods for compiling and weighting measures.1

To address this gap in the health economy, we recently introduced SimilarityIndex™ | Hospitals and its accompanying visualizer. Instead of ranking hospitals based on a mix of objective and subjective criteria, the SimilarityEngine™ compares them using objective and diverse datapoints, allowing users to find the hospitals that are true peers.

Background

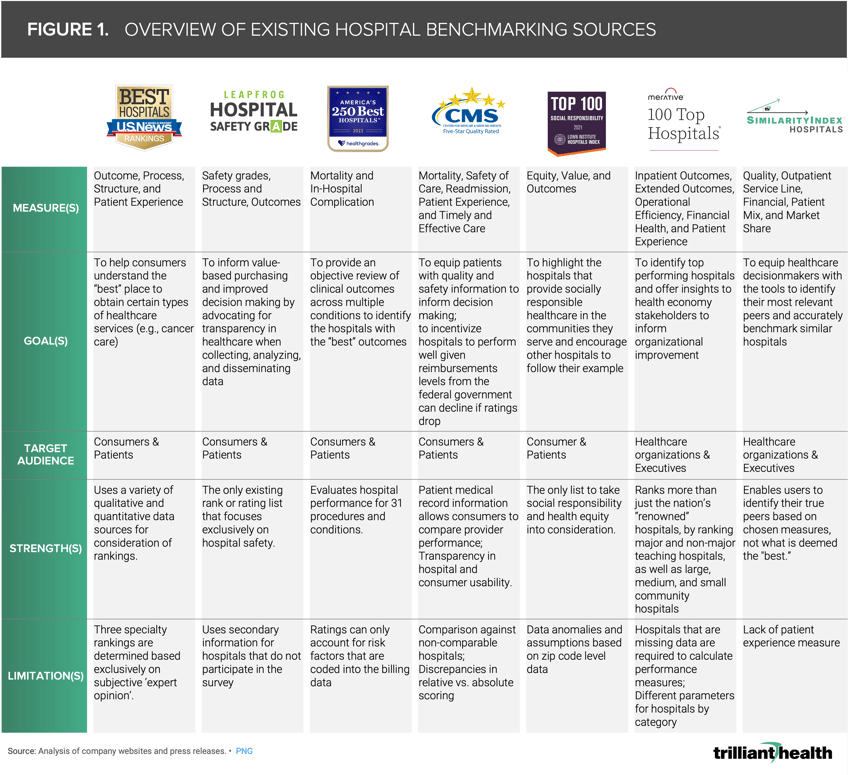

Most existing hospital rankings and ratings provide ordinal scores or ordered lists (i.e., best to worst) based in part on a variety of quality-centric measures, including HCAHPS, 30-day risk-adjusted mortality rate, and readmission rates. Over time, the “best” or “top” hospital lists have become an element in strategic planning even though they are targeted to consumers (Figure 1). Whereas the U.S. News & World Report rankings aim to help consumers understand the “best” place to receive certain types of healthcare services, Leapfrog Group scores hospitals on patient safety.2,3 Healthgrades provides a review of clinical outcomes across multiple conditions to identify the hospitals with the “best” outcomes.4 While CMS Care Compare is intended to educate patients and provide consumer-curated scores, it also is used to incentivize performance, with Federal reimbursement levels (i.e., Medicare, Medicaid) subject to change based on a hospital’s rating score.5 Merative, comparatively, is intended to educate healthcare executives on organizational improvement initiatives.6

For a more detailed version of Figure 1, click here.

As Figure 1 reveals, the existing ranking and ratings methods lack comparative elements, do not holistically benchmark hospitals beyond limited quality-based metrics, and rely heavily on subjective data and reputation-based surveys. As a result, hospitals and health systems make arbitrary and incomplete parallels between themselves and some of the nation’s “top” hospitals. In contrast, SimilarityIndex™ | Hospitals uses Euclidean geometry to compare hospitals nationally based on quality, outcomes, safety, financial performance, market share, hospital capacity, and distribution of services rendered. These categories can also be aggregated to provide a comprehensive comparison.

Analytic Approach

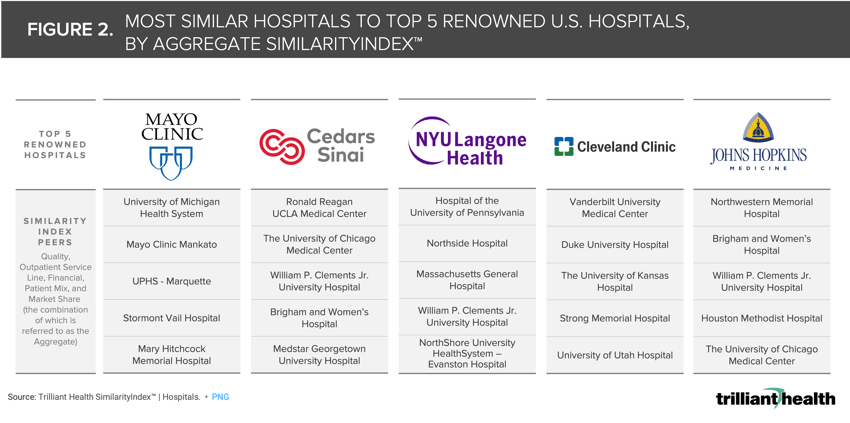

As an illustrative example, we identified five renowned U.S. hospitals that often appear at the top of existing hospital benchmarking lists. Leveraging the Aggregate SimilarityIndex™, we identified the five most similar hospitals to each renowned healthcare facility.
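The peer-finding step can be illustrated with a minimal sketch. It assumes each hospital is reduced to a vector of numeric metrics, that each metric is standardized so no single scale dominates, and that similarity is the plain Euclidean distance between vectors. The hospital names, metrics, values, and function names below are hypothetical, and the actual SimilarityEngine™ features and weighting are not described in this article.

```python
import math


def standardize(rows):
    """Z-score each column so no single metric dominates the distance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    # Fall back to 1.0 when a column has zero variance to avoid dividing by zero.
    stds = [
        math.sqrt(sum((x - m) ** 2 for x in c) / len(c)) or 1.0
        for c, m in zip(cols, means)
    ]
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


def most_similar(features, benchmark, k=5):
    """Return the k hospitals closest to `benchmark` by Euclidean distance."""
    names = list(features)
    scaled = dict(zip(names, standardize([features[n] for n in names])))
    target = scaled[benchmark]
    distances = [
        (math.dist(target, scaled[n]), n) for n in names if n != benchmark
    ]
    return [name for _, name in sorted(distances)[:k]]


# Hypothetical hospitals with made-up values:
# (staffed beds, readmission rate, operating margin)
hospitals = {
    "Hospital A": [900, 0.14, 0.05],
    "Hospital B": [880, 0.15, 0.04],
    "Hospital C": [120, 0.18, -0.02],
    "Hospital D": [450, 0.16, 0.01],
}

print(most_similar(hospitals, "Hospital A", k=2))  # ['Hospital B', 'Hospital D']
```

In this toy data, Hospital B is the nearest peer to Hospital A because it is close on all three metrics at once, which is the sense in which a distance-based index differs from an ordinal ranking.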

Findings

The same renowned hospitals appear in the U.S. News & World Report rankings year after year, leading stakeholders to incorrectly assume that they are peers. Applying Euclidean principles to objective data reveals that the five highest-ranked hospitals in the 2022-2023 Best Hospitals Honor Roll are dissimilar in many respects. Conversely, William P. Clements, Jr. University Hospital, which is not on the Best Hospitals Honor Roll, is similar to Cedars-Sinai, NYU Langone Health, and Johns Hopkins Hospital.

While many well-known hospitals such as Mayo Clinic, Cleveland Clinic, and Cedars-Sinai may be reputational peers, they are not relevant peers for thousands of other acute care hospitals. By leveraging a diverse range of metrics and methods, sourced from both proprietary and secondary data, SimilarityIndex™ | Hospitals enables decision makers to holistically compare hospitals beyond quality and outcomes alone. Accounting for factors such as services rendered, the hospital’s capacity, financial standing, overall market share, and patient mix is critical for identifying true hospital peers.

Use the SimilarityIndex™ | Hospitals visualizer to select a benchmark hospital of your choice to index.

Thanks to Megan Davis and Katie Patton for their research support.
